What Is Financial Correctness Monitoring — and Why Uptime Metrics Miss It

By Abhijeet Batsa, Founder at Vellix.io | Ex Paytm Money, Snapdeal, Rakuten

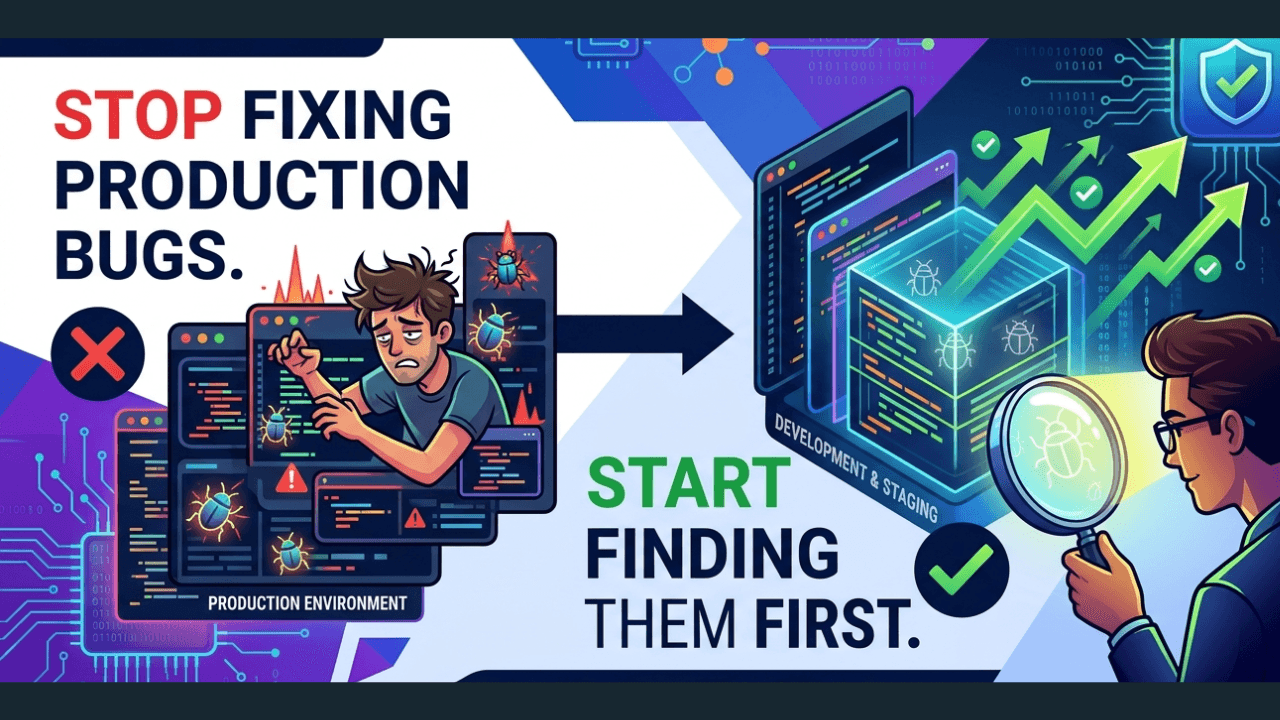

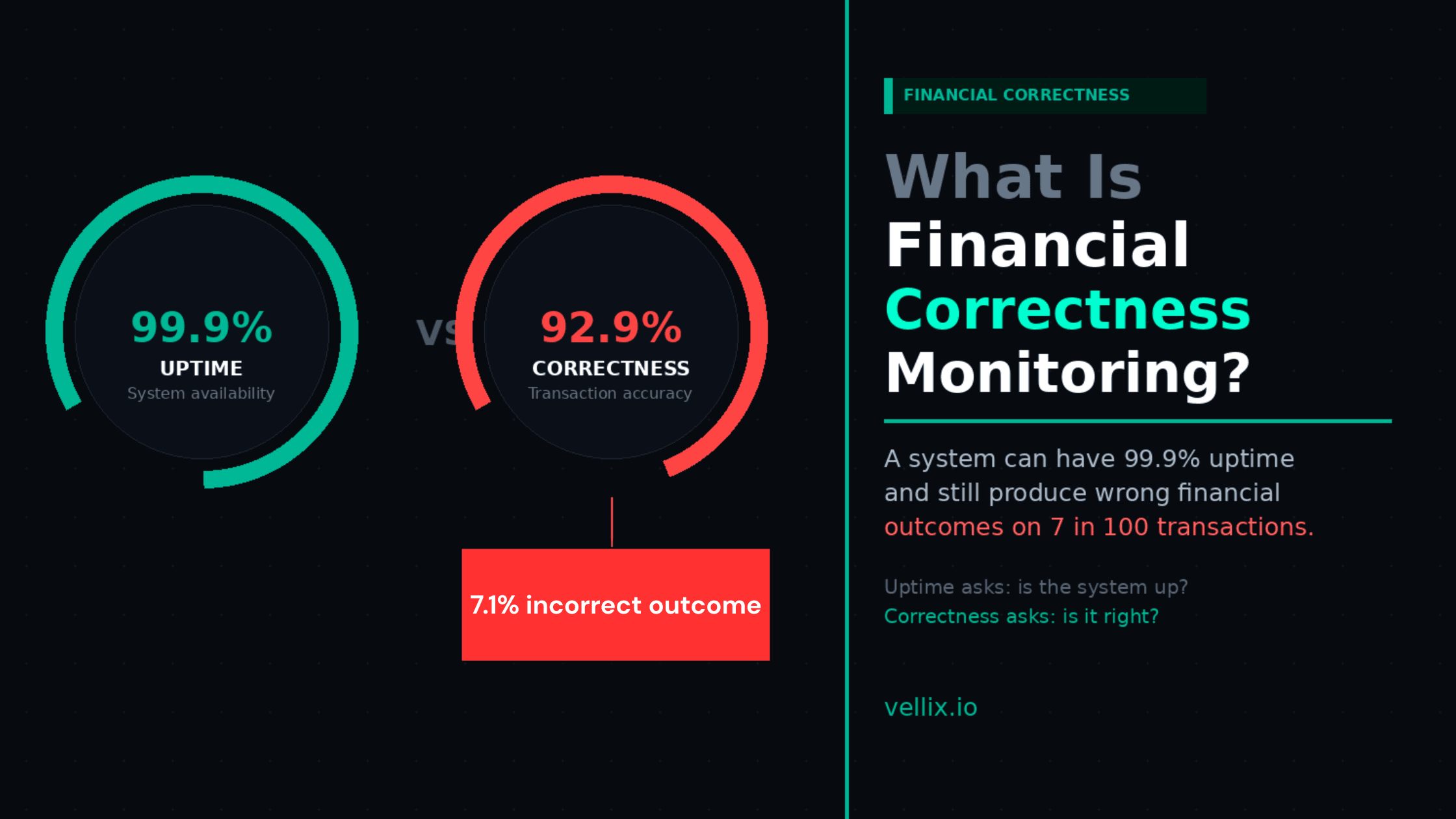

A payments platform I worked with had 99.9% uptime for eight consecutive months. Their SLA was met. Their monitoring dashboards were green. Their P0 incident count was zero.

In month nine, they discovered that 7.1% of their transactions had been producing incorrect outcomes for eleven weeks.

Not system failures. The infrastructure was running fine. Payments were processing and completing. But roughly 7 in every 100 transactions had product logic errors: wrong fee tiers applied, incorrect state transitions recorded, settlement amounts that didn't match initiated amounts by small but real margins.

The system was up. The product was wrong.

This distinction — between a system being available and a system being correct — is what financial correctness monitoring addresses. And it's a gap that standard reliability engineering completely misses.

What Uptime Monitoring Measures (and What It Doesn't)

Traditional reliability monitoring — whether you call it SRE, observability, or just DevOps monitoring — focuses on a specific set of signals:

Availability: Is the system responding to requests?

Latency: How fast is it responding?

Error rate: What percentage of requests are returning errors?

Throughput: How many requests is it handling?

These overlap heavily with the "four golden signals" of SRE (latency, traffic, errors, and saturation), and they are genuinely important for operational reliability. But they answer the question "is the system up?" They don't answer "is the system right?"

A payment that processes at the wrong fee tier doesn't produce an error. It produces a 200 OK. Latency is normal. Throughput is normal. Every signal listed above is green.

The product is wrong. Your monitoring doesn't see it.

The Financial Correctness Gap

Financial products have a property that most software doesn't: their correctness is measurable in money. A wrong outcome isn't just a degraded user experience — it's a specific rupee amount that went somewhere it shouldn't have, or didn't go somewhere it should have.

This creates a class of bugs unique to fintech:

Silent incorrect outcomes: The transaction completes. The user receives a confirmation. But the underlying amounts, fees, or routing are wrong. Nobody knows until a user complains, a reconciliation report flags it, or an audit finds it.

Accumulating small errors: A rounding error of ₹0.01 per transaction sounds trivial. At 100,000 transactions per day, that is ₹1,000 per day, ₹30,000 per month, and roughly ₹3.6 lakh (₹3.6L) per year, all of it showing up somewhere in your books as an unreconciled difference.

Compliance-relevant logic failures: A KYC edge case that assigns the wrong risk profile. An AML threshold that triggers (or doesn't trigger) based on a miscalculated running total. A regulatory reporting flag that fires on incorrect criteria. These aren't just product bugs — they're regulatory exposure.

Cascading state errors: A transaction that gets recorded in the wrong state creates downstream consequences. Settlement fails. Reconciliation mismatches. Customer service escalations. The original 200 OK was the start of a problem that took three teams two days to unwind.
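To make the "accumulating small errors" case concrete, here is a minimal Python sketch of how a one-paisa difference can appear and add up. The 2.5% fee rate and the ₹500.20 amount are assumptions chosen for illustration; the only figures taken from the text are the ₹0.01 error and the 100,000 transactions per day. The two fee calculations differ only in their rounding mode, which is the kind of change a refactor can introduce without anyone noticing.

```python
from decimal import Decimal, ROUND_HALF_UP, ROUND_HALF_EVEN

FEE_RATE = Decimal("0.025")   # hypothetical 2.5% fee, for illustration only
PAISA = Decimal("0.01")
TXNS_PER_DAY = 100_000        # volume figure from the text


def fee(amount: Decimal, rounding: str) -> Decimal:
    """Fee rounded to the nearest paisa using the given rounding mode."""
    return (amount * FEE_RATE).quantize(PAISA, rounding=rounding)


amount = Decimal("500.20")                  # raw fee is exactly 12.505, a tie
expected_fee = fee(amount, ROUND_HALF_UP)   # what the fee rule intends
actual_fee = fee(amount, ROUND_HALF_EVEN)   # what a refactored function might do

drift_per_txn = expected_fee - actual_fee
# Worst case: every transaction of the day hits the tie.
daily_drift = drift_per_txn * TXNS_PER_DAY

print(f"per transaction: ₹{drift_per_txn}, per day: ₹{daily_drift}")
```

Both calls return a valid-looking fee and neither raises an error, which is why this class of bug stays silent until reconciliation.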

What Financial Correctness Monitoring Actually Is

Financial correctness monitoring is the practice of continuously validating that the outcomes your financial system produces match the outcomes it was designed to produce.

In practice, it has three layers:

Layer 1: Transaction Outcome Validation

At the transaction level: does the settled amount match the initiated amount (after applying the correct fees, exchange rates, and rules)?

This isn't the same as a settlement report. A settlement report tells you what happened. Transaction outcome validation tells you whether what happened was correct.

Implementation: for every completed transaction, automated logic checks that:

Fee applied = fee rule for this transaction type and amount tier

Settlement currency and amount = expected amount after conversion at the recorded rate

State machine final state = expected state given the transaction path taken

Idempotency key was honoured (no duplicate processing)
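The four checks above can be sketched as a single validation function. This is a minimal illustration rather than a reference implementation: the `Transaction` fields, the fee tiers, the state names, and the assumption that the fee is deducted before conversion are all hypothetical and would need to match your own rules.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_UP

PAISA = Decimal("0.01")

# Hypothetical fee tiers: (exclusive upper bound on amount, fee rate).
FEE_TIERS = [
    (Decimal("1000"), Decimal("0.025")),
    (Decimal("Infinity"), Decimal("0.020")),
]

# Hypothetical state machine: allowed next states for each state.
ALLOWED_TRANSITIONS = {
    "INITIATED": {"AUTHORIZED", "FAILED"},
    "AUTHORIZED": {"SETTLED", "FAILED"},
}


@dataclass(frozen=True)
class Transaction:
    txn_id: str
    idempotency_key: str
    amount: Decimal           # initiated amount, source currency
    fee_charged: Decimal      # fee actually applied
    fx_rate: Decimal          # conversion rate recorded on the transaction
    settled_amount: Decimal   # amount actually settled, target currency
    path: tuple               # states visited, in order


def expected_fee(amount: Decimal) -> Decimal:
    """Fee the tier table says this amount should carry."""
    rate = next(rate for bound, rate in FEE_TIERS if amount < bound)
    return (amount * rate).quantize(PAISA, rounding=ROUND_HALF_UP)


def validate_transaction(txn: Transaction, seen_keys: set) -> list:
    """Return the names of the checks this completed transaction fails."""
    violations = []

    fee = expected_fee(txn.amount)
    if txn.fee_charged != fee:
        violations.append("fee")

    # Assumption: the fee is deducted first, then the recorded rate is applied.
    expected_settled = ((txn.amount - fee) * txn.fx_rate).quantize(
        PAISA, rounding=ROUND_HALF_UP
    )
    if txn.settled_amount != expected_settled:
        violations.append("settlement")

    # Every step must be a legal transition and the path must end in SETTLED.
    legal = all(
        nxt in ALLOWED_TRANSITIONS.get(cur, set())
        for cur, nxt in zip(txn.path, txn.path[1:])
    )
    if not legal or not txn.path or txn.path[-1] != "SETTLED":
        violations.append("state")

    # An idempotency key seen before means the request was processed twice.
    if txn.idempotency_key in seen_keys:
        violations.append("duplicate")
    seen_keys.add(txn.idempotency_key)

    return violations
```

A transaction charged at the wrong tier returns `["fee", "settlement"]` from this function even though the API call that created it returned 200 OK.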

Layer 2: Product Logic Regression Monitoring

At the product rule level: as your codebase changes, are your business rules still being applied correctly?

Every code deployment is a potential introduction of product logic regression. A fee calculation function refactored for performance might produce different results at edge amounts. A KYC flow updated for a new compliance requirement might break the re-attempt path. A payment routing rule change might affect transactions that weren't in the intended scope.

Product logic regression monitoring runs your core business rule assertions against production data after each deployment. Not synthetic tests — real transaction data. It surfaces when a deployment caused a product logic change that wasn't intended.
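One way to picture this is a small registry of named business rule assertions that is run over a sample of transactions recorded after a deployment. The sketch below assumes each transaction is available as a dict with `txn_id`, `amount`, `fee`, and `settled` keys and uses a hypothetical flat 2.5% fee; the rule names and the row shape are illustrative, not a prescribed schema.

```python
from decimal import Decimal, ROUND_HALF_UP

PAISA = Decimal("0.01")
FEE_RATE = Decimal("0.025")  # hypothetical flat fee rate


def fee_matches_rule(row: dict) -> bool:
    """The recorded fee equals the fee the rule prescribes for this amount."""
    expected = (row["amount"] * FEE_RATE).quantize(PAISA, rounding=ROUND_HALF_UP)
    return row["fee"] == expected


def settlement_is_net_of_fee(row: dict) -> bool:
    """The settled amount equals the initiated amount minus the recorded fee."""
    return row["settled"] == row["amount"] - row["fee"]


ASSERTIONS = {
    "fee_matches_rule": fee_matches_rule,
    "settlement_is_net_of_fee": settlement_is_net_of_fee,
}


def run_assertions(rows, assertions=ASSERTIONS) -> dict:
    """Map each assertion name to the ids of the transactions that violate it."""
    failures = {name: [] for name in assertions}
    for row in rows:
        for name, check in assertions.items():
            if not check(row):
                failures[name].append(row["txn_id"])
    return failures


def rule_regression_count(failures: dict) -> int:
    """Number of assertions with at least one failing transaction."""
    return sum(1 for txn_ids in failures.values() if txn_ids)
```

Running `run_assertions` on the transactions processed since the last release, and comparing the result with the run before the release, shows which rules started failing with that deployment.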

Layer 3: Reconciliation-Layer Monitoring

At the financial record level: do your internal records match the payment processor records, bank records, and ledger?

Reconciliation at most fintech companies is a manual, periodic process — often done by a finance team member running a report once per day or once per week. By the time a discrepancy is found, it's been compounding for 24–168 hours.

Automated reconciliation-layer monitoring closes this window. It runs the reconciliation check continuously — or at minimum, after every batch of settlements — and alerts immediately when internal records diverge from external records.
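A reconciliation check of this kind can be sketched in a few lines. The example assumes both sides have already been loaded into dicts keyed by transaction id with `Decimal` amounts; the discrepancy labels and the 0.1% alert threshold are placeholders, not recommended values.

```python
from decimal import Decimal
from typing import NamedTuple, Optional


class Discrepancy(NamedTuple):
    txn_id: str
    kind: str                    # "missing_internally", "missing_at_processor", "amount_mismatch"
    internal: Optional[Decimal]
    processor: Optional[Decimal]


def reconcile(internal: dict, processor: dict) -> list:
    """Compare internal records with processor records, keyed by transaction id."""
    discrepancies = []
    for txn_id in sorted(internal.keys() | processor.keys()):
        ours, theirs = internal.get(txn_id), processor.get(txn_id)
        if ours is None:
            kind = "missing_internally"
        elif theirs is None:
            kind = "missing_at_processor"
        elif ours != theirs:
            kind = "amount_mismatch"
        else:
            continue  # both sides agree
        discrepancies.append(Discrepancy(txn_id, kind, ours, theirs))
    return discrepancies


def mismatch_rate(discrepancies: list, total: int) -> Decimal:
    """Share of transactions with any discrepancy (0 when there is nothing to compare)."""
    return Decimal(len(discrepancies)) / total if total else Decimal(0)


def should_alert(discrepancies: list, total: int,
                 threshold: Decimal = Decimal("0.001")) -> bool:
    """Alert when the mismatch rate exceeds the (placeholder) threshold."""
    return mismatch_rate(discrepancies, total) > threshold
```

Calling `reconcile` after every settlement batch, rather than once a day, is what shrinks the detection window described above.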

Why This Is a Product Engineering Problem, Not a Finance Problem

The instinct is to assign financial correctness to the finance team. They do reconciliation. They check the numbers. They file the reports.

The problem: by the time finance finds a discrepancy, the product has already caused it. The engineering decisions that produced the incorrect outcome (the fee calculation logic, the state machine implementation, the refund routing rules) may have been made months earlier. The finance team is discovering symptoms; the cause is in the code.

Financial correctness monitoring belongs in the engineering layer because that's where the outcomes are produced. Finance reconciliation is a lagging indicator. Product logic monitoring is a leading one.

In practice, this means:

Engineering owns the automated transaction outcome validation

QA engineers include financial correctness assertions in their test suites (not just functional correctness)

Deployments are gated on product logic regression checks, not just unit test pass rates

Monitoring dashboards show financial correctness metrics alongside uptime metrics

The Metrics That Actually Matter for Financial Products

If you're measuring the right things, your monitoring dashboard for a fintech product should include:

Standard reliability metrics (already exist):

Uptime / availability

API latency (p50, p95, p99)

Error rate by endpoint

Financial correctness metrics (usually missing):

Incorrect outcome rate: % of completed transactions where the outcome differed from the expected outcome based on business rules

Reconciliation mismatch rate: % of transactions where internal records don't match processor records within the settlement window

Rule regression count: number of business rule assertions that failed after the last deployment

Mean time to detection (MTTD) for product logic errors: how long between a logic error being introduced and being detected

The 7.1% incorrect outcome rate from the example above? It was invisible on their uptime dashboard. It would have been the headline metric on a financial correctness dashboard.
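As a rough sketch, the financial correctness metrics listed above reduce to a couple of small calculations. The function names and the `CorrectnessSnapshot` container are hypothetical; the counts would come from whatever validation and reconciliation jobs you already run.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


def rate_percent(bad: int, total: int) -> float:
    """Percentage of `total` that is `bad`; 0.0 when there is no traffic."""
    return 100 * bad / total if total else 0.0


def mean_time_to_detection(incidents: list) -> Optional[timedelta]:
    """Mean gap between (introduced_at, detected_at) pairs, or None if empty."""
    gaps = [detected - introduced for introduced, detected in incidents]
    return sum(gaps, timedelta()) / len(gaps) if gaps else None


@dataclass
class CorrectnessSnapshot:
    incorrect_outcome_rate: float        # % of completed transactions
    reconciliation_mismatch_rate: float  # % of transactions
    rule_regression_count: int           # assertions failing since last deploy
    mttd: Optional[timedelta]            # mean time to detection


# Illustrative numbers only: 71 incorrect outcomes in 1,000 completed
# transactions, and one logic error detected eleven weeks after release.
introduced = datetime(2024, 1, 1)
snapshot = CorrectnessSnapshot(
    incorrect_outcome_rate=rate_percent(71, 1000),
    reconciliation_mismatch_rate=rate_percent(3, 1000),
    rule_regression_count=1,
    mttd=mean_time_to_detection([(introduced, introduced + timedelta(weeks=11))]),
)
print(snapshot)
```

Putting these four numbers next to uptime and latency on the same dashboard is the point: a non-zero incorrect outcome rate should be as visible as an error-rate spike.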

How to Start: A Practical 3-Step Implementation

You don't need to build a full financial correctness monitoring system overnight. Three steps, in order:

Step 1: Instrument your reconciliation layer. Before anything else, add automated settlement reconciliation that runs daily. Compare your internal transaction records against your payment processor settlement files. Log every discrepancy. Alert if the discrepancy rate exceeds a threshold. This alone should surface errors that show up as a mismatch against the processor within about 24 hours instead of weeks.

Step 2: Add business rule assertions to your test suite. For your top three revenue-critical flows, write explicit test assertions for the financial outcomes — not just the API responses. "Fee applied to a ₹500 transaction should be ₹12.50, not ₹12.49 or ₹12.51." These assertions catch logic regressions during development, before they reach production.

Step 3: Add post-deployment validation. After each production deployment, run an automated check that replays recent real transactions against the expected business rules. If the rule application changes after a deployment, surface it immediately.
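For Step 2, a financial outcome assertion might look like the following pytest-style sketch. `calculate_fee` stands in for your real fee function; the flat 2.5% rate and half-up rounding are assumptions inferred from the ₹500 to ₹12.50 example, not a statement of how any particular system computes fees.

```python
from decimal import Decimal, ROUND_HALF_UP


def calculate_fee(amount: Decimal) -> Decimal:
    """Hypothetical function under test: 2.5% fee, rounded half-up to the paisa."""
    return (amount * Decimal("0.025")).quantize(
        Decimal("0.01"), rounding=ROUND_HALF_UP
    )


def test_fee_on_500_is_exactly_12_50():
    # Assert the money, not the HTTP status: ₹12.49 or ₹12.51 must fail.
    assert calculate_fee(Decimal("500")) == Decimal("12.50")


def test_fee_is_always_expressed_in_whole_paise():
    # 333.33 * 0.025 = 8.33325; the result must carry exactly two decimals.
    fee = calculate_fee(Decimal("333.33"))
    assert fee == Decimal("8.33")
    assert fee.as_tuple().exponent == -2
```

Using `Decimal` with an explicit rounding mode keeps the assertion exact; comparing floats here would either need a tolerance or fail intermittently, and a tolerance is precisely what lets a one-paisa regression through.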

The Question That Drives This

The right question for a fintech engineering team isn't "is the system up?"

It's "is the system right?"

A system that's up but wrong is worse than a system that's down. Downtime is visible. Incorrect outcomes are silent. Downtime is measured in minutes. Incorrect outcomes accumulate in rupees.

Financial correctness monitoring is the discipline of asking the right question and measuring the answer.

Vellix provides financial correctness monitoring as part of its AI reliability platform for fintech — including automated reconciliation-layer validation, product logic regression monitoring, and RCA report generation. vellix.io | support@vellix.io

Abhijeet Batsa is the founder of Vellix.io and FuturestaQ. He has 16 years of experience across Paytm Money ($4B AUM), Snapdeal, and Rakuten Viki Singapore.