Every Type of Software Testing Explained — And Where AI Fits In

By Abhijeet Batsa, Founder of Vellix.io | 10 min read

Software testing has more categories, subcategories, and frameworks than most engineers care to learn. This post cuts through the noise: what each type of testing actually does, when to use it, and how AI-powered test generation fits into a modern QA strategy.

The Two Fundamental Divisions

Before the subcategories, there are two axes that define all software testing:

Functional vs Non-Functional

Functional testing: Does the software do what it is supposed to do?

Non-functional testing: How well does it do it? (speed, reliability, security, usability)

Manual vs Automated

Manual testing: A human executes test cases

Automated testing: A script or tool executes test cases

AI-powered: An AI generates or executes test cases

Almost every type of testing can be performed manually or with automation. Which tests to automate depends on how often they repeat, how many cases they cover, and how critical the flow under test is.

Functional Testing Types

Unit Testing

What it is: Testing individual functions, methods, or components in isolation.

Who does it: Developers, during development.

When to use it: Every time a function is written or modified.

Tools: Jest, JUnit, pytest, NUnit.

Where Vellix fits: Vellix can generate unit test scenarios for API functions — edge cases and boundary conditions that developers typically miss.
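As a minimal sketch of what boundary-focused unit tests look like, the example below tests a fee calculation at and just beyond its limits. The function `calculate_fee`, the limits, and the 1.5% rate are all hypothetical, chosen only for illustration; the tests are written as plain functions with `assert` so that pytest can collect them, but nothing here depends on pytest being installed.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical business rules, assumed only for this example.
MIN_AMOUNT = Decimal("1.00")
MAX_AMOUNT = Decimal("100000.00")
FEE_RATE = Decimal("0.015")  # 1.5%


def calculate_fee(amount: Decimal) -> Decimal:
    """Return the fee for a transfer, rounded half-up to two decimal places."""
    if not (MIN_AMOUNT <= amount <= MAX_AMOUNT):
        raise ValueError(f"amount {amount} is outside the allowed range")
    return (amount * FEE_RATE).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def test_fee_at_lower_boundary():
    # 1.00 * 0.015 = 0.015, which rounds half-up to 0.02
    assert calculate_fee(Decimal("1.00")) == Decimal("0.02")


def test_fee_at_upper_boundary():
    assert calculate_fee(Decimal("100000.00")) == Decimal("1500.00")


def test_amounts_just_outside_the_limits_are_rejected():
    for bad in (Decimal("0.99"), Decimal("100000.01"), Decimal("-5.00")):
        try:
            calculate_fee(bad)
        except ValueError:
            continue
        raise AssertionError(f"{bad} should have been rejected")
```

The values one step inside and one step outside each limit are the cases most likely to expose an off-by-one comparison or a rounding mistake.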

Integration Testing

What it is: Testing how different modules or services interact with each other.

Who does it: Developers or QA engineers.

When to use it: When multiple services or components need to work together — especially when integrating with third-party APIs.

Tools: Postman, REST Assured, Karate.

The fintech angle: Most fintech failures originate at integration points, such as the interface between your system and a payment gateway, bank API, or KYC vendor. This is where the "it works in isolation, breaks in combination" problem lives.
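To illustrate the kind of seam an integration test exercises, here is a small sketch in which a payment service talks to a stand-in gateway. Every name (`FakeGateway`, `PaymentService`, the status strings) is an assumption made for the example, not a real vendor interface; in an actual integration test the fake would typically be replaced by the vendor's sandbox environment.

```python
class GatewayTimeout(Exception):
    """Raised when the gateway does not answer in time (outcome unknown)."""


class FakeGateway:
    """Stand-in for a third-party payment gateway (hypothetical interface)."""

    def __init__(self, behaviour: str):
        self.behaviour = behaviour  # "success", "declined", or "timeout"

    def charge(self, order_id: str, amount_minor: int) -> dict:
        if self.behaviour == "timeout":
            raise GatewayTimeout()
        if self.behaviour == "declined":
            return {"status": "DECLINED"}
        return {"status": "SUCCESS", "gateway_ref": f"gw-{order_id}"}


class PaymentService:
    """The system under test: maps gateway outcomes to order states."""

    def __init__(self, gateway: FakeGateway):
        self.gateway = gateway
        self.orders = {}

    def pay(self, order_id: str, amount_minor: int) -> str:
        try:
            response = self.gateway.charge(order_id, amount_minor)
        except GatewayTimeout:
            # The money may or may not have moved, so the order is neither
            # paid nor failed until the outcome is verified.
            self.orders[order_id] = "PENDING_VERIFICATION"
            return self.orders[order_id]
        paid = response["status"] == "SUCCESS"
        self.orders[order_id] = "PAID" if paid else "FAILED"
        return self.orders[order_id]


def test_gateway_outcomes_map_to_order_states():
    assert PaymentService(FakeGateway("success")).pay("o1", 1000) == "PAID"
    assert PaymentService(FakeGateway("declined")).pay("o2", 1000) == "FAILED"
    assert PaymentService(FakeGateway("timeout")).pay("o3", 1000) == "PENDING_VERIFICATION"
```

The timeout case is the interesting one: each component behaves correctly on its own, and the defect only appears if the service treats "no answer" as "failed".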

System Testing

What it is: Testing the entire application as a single integrated system.

Who does it: QA engineers.

When to use it: Before release, after major feature additions.

Tools: Selenium, Playwright, Cypress (for UI); Postman collections (for API).

End-to-End (E2E) Testing

What it is: Testing complete user journeys from start to finish, including all system components.

Who does it: QA engineers.

When to use it: For critical user flows such as checkout, onboarding, authentication, and payment.

Tools: Playwright, Selenium, Cypress.

The fintech angle: E2E testing in fintech must include the full transaction lifecycle — not just "did the button work" but "did the money move correctly and did both systems agree on the state."

Regression Testing

What it is: Re-running existing tests after code changes to ensure nothing previously working has broken.

Who does it: QA engineers (automated, typically).

When to use it: After every deployment or significant code change.

Why it matters: In fast-moving fintech teams, regressions in payment flows are among the most common sources of production incidents. Automated regression testing on critical flows is non-negotiable.

Smoke Testing

What it is: A quick check of the most critical functions after a new build — "does the app start, can users log in, can a basic transaction complete?"

Who does it: QA engineers or automated pipeline.

When to use it: Immediately after every deployment, before deeper testing begins.

Sanity Testing

What it is: Focused testing on specific functionality after a bug fix or minor change.

Who does it: QA engineers.

When to use it: After a targeted fix, to verify the fix works without re-running the full regression suite.

User Acceptance Testing (UAT)

What it is: Testing by end users or business stakeholders to verify the product meets business requirements.

Who does it: Business users, product managers, clients.

When to use it: Before final release, especially for client-facing features.

The misconception: UAT is not a replacement for QA. It is the final gate, not the only gate. A product that reaches UAT with critical functional defects has failed QA — not UAT.

Non-Functional Testing Types

Performance Testing

What it is: Evaluating how the system behaves under various load conditions.

Subtypes:

Load testing: Normal expected load

Stress testing: Beyond normal capacity, to find the breaking point

Spike testing: Sudden large increase in load

Soak testing: Sustained load over a long period

Tools: JMeter, k6, Gatling, Locust.

The fintech angle: Payment systems face predictable peak loads: salary dates, festival seasons, IPO subscription windows. Stress testing these flows before the peak is essential.

Security Testing

What it is: Identifying vulnerabilities and security weaknesses.

Subtypes: Penetration testing, vulnerability scanning, authentication testing.

Tools: OWASP ZAP, Burp Suite.

The fintech angle: In regulated fintech products, security testing is not optional. PCI-DSS compliance, RBI guidelines, and SEBI regulations all require demonstrable security testing.

Exploratory Testing

What it is: Unscripted, human-driven testing where the tester simultaneously learns the product, designs tests, and executes them.

Who does it: Experienced QA engineers.

When to use it: For new features, edge case discovery, and finding issues that scripted tests miss.

Why it matters: This is the one type of testing that AI cannot replace. Real users behave unpredictably, and an experienced tester who thinks like a malicious or confused user will find things no automated suite will.

Usability Testing

What it is: Evaluating how easy and intuitive the product is to use.

Who does it: UX researchers, QA engineers, real users.

Tools: UserTesting, Hotjar, session recordings.

Accessibility Testing

What it is: Verifying the product is usable by people with disabilities.

Standards: WCAG 2.1 compliance.

Tools: Axe, WAVE, Lighthouse.

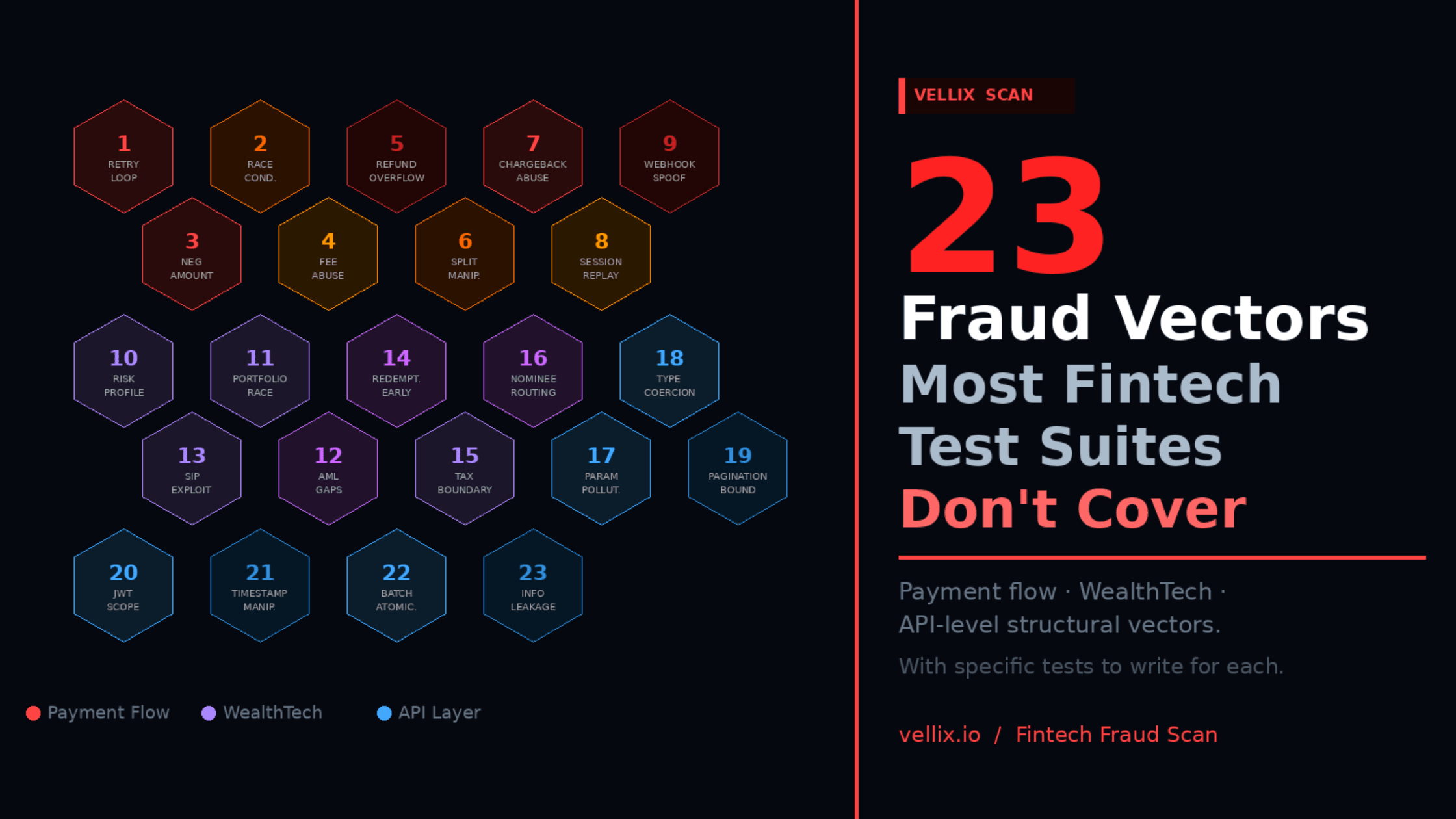

Testing Specific to Fintech

These testing types are standard across software but become critical in financial products:

API Contract Testing

Verifying that the API response matches the documented contract — data types, field names, required fields. Critical when integrating with banking partners where an undocumented field change can break a payment flow.
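A contract check can be sketched as a comparison between a response and a table of expected fields and types. The contract below (`transaction_id`, `amount_minor`, and so on) is hypothetical and stands in for whatever the partner's documentation specifies; dedicated schema or contract-testing tools do this more thoroughly.

```python
# Hypothetical documented contract: field name -> expected Python type.
CONTRACT = {
    "transaction_id": str,
    "amount_minor": int,   # amount in the smallest currency unit
    "currency": str,
    "status": str,
}


def contract_violations(response: dict, contract: dict) -> list:
    """Return human-readable differences between a response and its contract."""
    problems = []
    for field, expected_type in contract.items():
        if field not in response:
            problems.append(f"missing required field: {field}")
        elif not isinstance(response[field], expected_type):
            actual = type(response[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    # Fields the contract does not mention are reported too, since an
    # undocumented change often shows up as a renamed or added field.
    for field in sorted(response.keys() - contract.keys()):
        problems.append(f"undocumented field: {field}")
    return problems
```

For example, a response in which `transaction_id` was renamed to `txn_id` and `amount_minor` arrived as a string would produce three violations: one missing field, one type mismatch, and one undocumented field.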

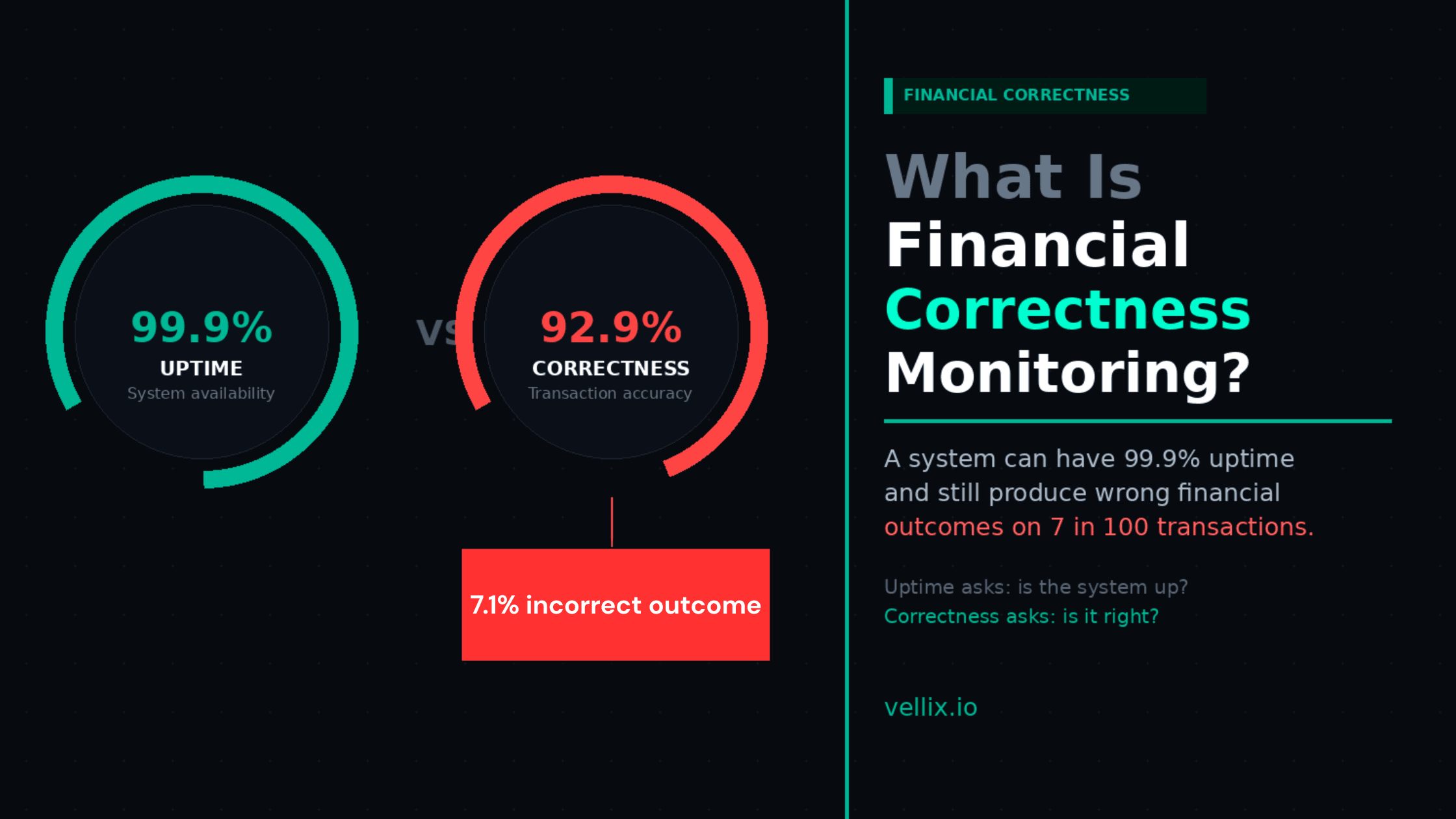

Financial Correctness Testing

This is arguably the most important and least commonly practiced type of testing in fintech: verifying that amounts, calculations, and financial state are correct, not just that the API returned a 200 OK. It includes settlement reconciliation, ledger balance checks, and rounding validation.
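Two of these checks, rounding validation and a ledger balance check, can be sketched with Python's `decimal` module. The helper names `split_amount` and `ledger_is_balanced` and the entry layout are assumptions made for illustration, not a prescribed design.

```python
from decimal import Decimal, ROUND_DOWN

CENT = Decimal("0.01")


def split_amount(total: Decimal, parts: int) -> list:
    """Split total into shares of whole cents that sum exactly to total."""
    base = (total / parts).quantize(CENT, rounding=ROUND_DOWN)
    leftover_cents = int((total - base * parts) / CENT)
    # Hand out the leftover cents one at a time so that nothing is lost.
    return [base + CENT if i < leftover_cents else base for i in range(parts)]


def ledger_is_balanced(entries: list) -> bool:
    """Double-entry check: total debits must equal total credits."""
    debits = sum(entry["debit"] for entry in entries)
    credits = sum(entry["credit"] for entry in entries)
    return debits == credits


def test_split_preserves_the_total():
    shares = split_amount(Decimal("100.00"), 3)
    assert shares == [Decimal("33.34"), Decimal("33.33"), Decimal("33.33")]
    assert sum(shares) == Decimal("100.00")


def test_ledger_balances_after_a_transfer():
    entries = [
        {"account": "customer", "debit": Decimal("100.00"), "credit": Decimal("0.00")},
        {"account": "merchant", "debit": Decimal("0.00"), "credit": Decimal("98.50")},
        {"account": "fees", "debit": Decimal("0.00"), "credit": Decimal("1.50")},
    ]
    assert ledger_is_balanced(entries)
```

The point of the first test is that three shares of 33.33 would silently lose a cent; an assertion on the sum catches that even when every individual response looks valid.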

Idempotency Testing

Verifying that duplicate requests (retry after timeout) produce the same result rather than creating duplicate transactions. One of the most common sources of real-money production bugs in payment systems.
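A minimal sketch of an idempotency test follows, assuming the API accepts a client-supplied idempotency key. The in-memory `InMemoryPaymentAPI` class is hypothetical and exists only so the test has something to run against; in practice the same assertions would target the real endpoint.

```python
import uuid


class InMemoryPaymentAPI:
    """Toy payment API that deduplicates requests by idempotency key."""

    def __init__(self):
        self._by_key = {}
        self.transactions = []

    def create_payment(self, idempotency_key: str, amount_minor: int) -> dict:
        # A repeated key returns the stored result instead of charging again.
        if idempotency_key in self._by_key:
            return self._by_key[idempotency_key]
        txn = {
            "id": str(uuid.uuid4()),
            "amount_minor": amount_minor,
            "status": "completed",
        }
        self.transactions.append(txn)
        self._by_key[idempotency_key] = txn
        return txn


def test_retry_with_same_key_does_not_duplicate():
    api = InMemoryPaymentAPI()
    key = str(uuid.uuid4())
    first = api.create_payment(key, 50_000)
    retry = api.create_payment(key, 50_000)  # simulated retry after a timeout
    assert retry == first
    assert len(api.transactions) == 1


def test_different_keys_create_separate_payments():
    api = InMemoryPaymentAPI()
    api.create_payment(str(uuid.uuid4()), 50_000)
    api.create_payment(str(uuid.uuid4()), 50_000)
    assert len(api.transactions) == 2
```

The second test guards against the opposite mistake: deduplicating on amount or payer rather than on the key, which would block legitimate repeat payments.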

State Machine Testing

Verifying that a transaction, order, or financial object moves only through allowed state transitions and cannot reach an invalid state. For example, a payment that goes from "pending" to "failed" and then to "completed" has followed a transition that should never be possible, which indicates a corrupt state machine.
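One way to sketch this is to write the allowed transitions down as a table and then assert that everything outside the table is rejected. The states and transitions below are an assumed, simplified payment lifecycle; a real system will have its own.

```python
# Assumed lifecycle for illustration: state -> states reachable from it.
ALLOWED = {
    "pending": {"completed", "failed"},
    "completed": {"refunded"},
    "failed": set(),
    "refunded": set(),
}


class InvalidTransition(Exception):
    pass


class Payment:
    def __init__(self, state: str = "pending"):
        self.state = state

    def transition(self, new_state: str) -> None:
        if new_state not in ALLOWED[self.state]:
            raise InvalidTransition(f"{self.state} -> {new_state}")
        self.state = new_state


def illegal_transitions() -> list:
    """Every (from, to) pair that the table does not allow."""
    return [(a, b) for a in ALLOWED for b in ALLOWED if b not in ALLOWED[a]]


def test_failed_payment_cannot_complete():
    payment = Payment()
    payment.transition("failed")
    try:
        payment.transition("completed")
    except InvalidTransition:
        return
    raise AssertionError("failed -> completed should be rejected")


def test_every_illegal_transition_is_rejected():
    for source, target in illegal_transitions():
        try:
            Payment(state=source).transition(target)
        except InvalidTransition:
            continue
        raise AssertionError(f"{source} -> {target} should be rejected")
```

Enumerating the illegal pairs from the table keeps the test in step with the lifecycle: adding a new state automatically adds its forbidden transitions to the test.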

Where AI-Powered Test Generation Fits

Traditional test generation requires a QA engineer to manually think through each scenario. This works for obvious cases but systematically misses:

Domain-specific edge cases requiring specialized knowledge

Combination failures (three things going wrong simultaneously)

Scenarios that only occur at scale or under specific timing conditions

AI-powered test generation — specifically Vellix for fintech — addresses this by producing test scenarios from a domain knowledge base rather than from the tester's individual expertise.

What AI does well:

Generating comprehensive scenario coverage from an API specification

Producing fintech-specific failure modes (payment edge cases, financial calculation boundaries)

Scaling test coverage without scaling headcount

What AI does not do:

Replace exploratory testing — human creativity and malicious thinking are still essential

Run the tests — AI generates scenarios; your existing framework executes them

Monitor production — generation is not monitoring

The modern QA strategy uses all three:

Manual / exploratory: Human testers for new features, UX validation, creative edge case discovery

Automated: Scripts for regression, performance, and critical path validation

AI-generated: Scenario expansion for domain-specific coverage, edge case discovery, test case generation at scale

Each plays a different role. None replaces the others.

A Practical Testing Pyramid for Fintech Teams

For a fintech team with limited QA resources, here is the priority order:

Automated smoke tests on all critical flows (run on every deployment)

AI-generated API tests for payment, KYC, and settlement endpoints (run on every PR)

Manual exploratory testing of new features before release

Performance tests for payment flows before peak seasons

UAT with product team before major releases

Security testing on a quarterly cadence

This covers the most critical bases with the least overhead.

Abhijeet Batsa is the founder of Vellix.io and FuturestaQ. He spent 16 years building payment and investment infrastructure at Paytm Money, Snapdeal, and Rakuten Viki.